Learning-style recognition from eye-hand movement using a dynamic Bayesian network

Eun-Sol Kim Computer Science and Engineering Seoul National University Seoul 151-744 eskim@bi.snu.ac.kr

Yung-Kyun Noh Mechanical and Aerospace Engineering Seoul National University Seoul 151-744 yungkyun.noh@gmail.com

Byoung-Tak Zhang Computer Science and Engineering Cognitive Science and Brain Science Programs Seoul National University Seoul 151-744 btzhang@bi.snu.ac.kr

Educators and psychologists have long debated the efficacy of personalizing teaching methods according to learning style [1-3]. Recently, learning styles have been obtained from learning models, and utilizing those styles has been shown to improve educational outcomes. However, this research uses only two features, human classification accuracy and response time, and it has been suggested that these two simple features are insufficient to characterize complex human learning styles [4,5]. In this work, we propose two additional features that dramatically improve the ability to discriminate between people with different learning styles.

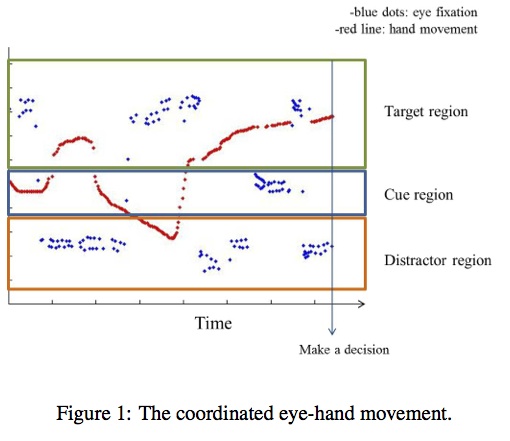

The features we propose are coordinated eye and hand movements. For eye movement, we used coordinate data recorded by an eye tracker (Arrington Research, BS007, binocular) during the psychological task. For hand movement, we used data from mouse-movement detection software as an approximation, and converted the sensor data into Cartesian coordinates. We then used machine learning algorithms to characterize the learning styles of different people.

Embodiment sensor data have been widely used in psychological experiments to investigate human cognitive characteristics, especially the cognitive processes of learning [6]. For example, eye movement has been used to study human language and vision processing as well as attention [7-9]. Hand movement data, which are often used in recent psychological experiments, reveal how a decision evolves [10-12]. In these works, the participant's confidence in an answer and stage of understanding can be recognized from the shape of the hand movement trajectory.

In our work, we designed a game for foreign language learning (specifically, English learning for Korean students) called MMG (Multimodal Memory Game) [13]. The game consists of two phases: watching a TV drama and playing to recall scenes from the drama. In this experiment, all of the students viewed an episode of the sitcom ’Friends’. The students are required to recall the drama in two kinds of tasks. One is to choose the corresponding image (text) when a text (image) is given as a cue. The other is to choose the next image (text) when the previous step’s image (text) is given. With this game, we can test the memory ability of the student.

In a previous study [14], we analyzed the cognitive characteristics of the user from the integrated information in MMG. The dynamics of a decision and the reasoning process can be represented with the coordinated information (Figure 1). This result can be applied to recognize personal learning styles. We designed a simple dynamic Bayesian network to recognize the learning style automatically. In the model, one node represents the eye-gaze point and another represents the hand movement. These nodes are observed at every step, and the hidden node, which represents the decision stage, is updated. By combining the decision stage inferred by the dynamic Bayesian network with the accuracy and the response time, we can recognize a personal learning style. The learning styles obtained with this method are similar to those of Grasha’s model [15], and we expect that, with further study, effective feedback can be provided based on this method.
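The abstract does not specify the network's structure or parameters, so the following is only a minimal sketch of how a hidden decision-stage node could be updated from eye and hand observations at each step. It assumes a discrete model in which gaze and hand positions are quantized into screen regions and are conditionally independent given the decision stage. The two stages, the three regions, and all probability values are hypothetical and chosen purely for illustration.

```python
import numpy as np

def forward_filter(eye_obs, hand_obs, prior, trans, emit_eye, emit_hand):
    """Return P(stage_t | observations up to step t) for every step t.

    Assumed (hypothetical) model: one hidden decision-stage node with two
    observed children per time slice, the eye-gaze region and the hand region.
    """
    belief = np.asarray(prior, dtype=float)
    filtered = []
    for t, (e, h) in enumerate(zip(eye_obs, hand_obs)):
        if t > 0:
            # Predict: propagate the belief through the stage transition model.
            belief = belief @ trans
        # Update: weight each stage by the likelihood of both observations.
        belief = belief * emit_eye[:, e] * emit_hand[:, h]
        belief = belief / belief.sum()
        filtered.append(belief)
    return np.array(filtered)

# Hypothetical parameters: stage 0 = "undecided", stage 1 = "decided".
prior = np.array([0.9, 0.1])
trans = np.array([[0.8, 0.2],    # rows: current stage, columns: next stage
                  [0.1, 0.9]])
# Rows: stage; columns: screen region (0, 1 = distractors, 2 = chosen answer).
emit_eye = np.array([[0.4, 0.4, 0.2],
                     [0.1, 0.1, 0.8]])
emit_hand = np.array([[0.6, 0.3, 0.1],
                      [0.1, 0.2, 0.7]])

# Made-up observation sequences: region index of gaze and cursor at each step.
eye_obs = [0, 1, 2, 2]
hand_obs = [0, 0, 1, 2]

filtered = forward_filter(eye_obs, hand_obs, prior, trans, emit_eye, emit_hand)
for t, b in enumerate(filtered):
    print(f"step {t}: P(undecided)={b[0]:.3f}, P(decided)={b[1]:.3f}")
```

In this toy run the belief shifts toward the "decided" stage once both gaze and cursor settle on the same region. A sequence of such inferred stages could then be combined with accuracy and response time as input to a learning-style classifier, as the text describes.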

Acknowledgments

This work is supported in part by the National Research Foundation (NRF) under Grant 2011-0016483-Videome.

References

[1] Kolb, D. (1984) Experiential learning: Experience as the source of learning and development. Englewood Cliffs, NJ: Prentice-Hall.

[2] Leite, W.L., Svinicki, M. & Shi, Y.S. (2009) Attempted Validation of the Scores of the VARK: Learning Styles Inventory With Multitrait-Multimethod Confirmatory Factor Analysis Models. Educational and Psychological Measurement 70(2), pp. 609-616.

[3] Pashler, H., McDaniel, M., Rohrer, D. & Bjork, R. (2008) Learning styles: Concepts and evidence. Psychological Science in the Public Interest 9, pp.105-119.

[4] Holden, C. (2010) Learning with Style. Science 327(5692), pp.129.

[5] Glenn, D. (2009) Matching Teaching Style to Learning Style May Not Help Students. The Chronicle of Higher Education, December 15.

[6] Ballard, D. H., Hayhoe, M. M., Pook, P. K. & Rao, R. P. N. (1997) Deictic codes for the embodiment of cognition. Behavioral and Brain Sciences 20, pp.723-767.

[7] Just, M. A. & Carpenter, P. A. (1976) Eye fixations and cognitive processes. Cognitive Psychology 8, pp.441-480.

[8] Rayner, K. (1998) Eye movements in reading and information processing: 20 years of research. Psychological Bulletin 124(3), pp.372-422.

[9] Poole, A. & Ball, L. J. (2006) Eye tracking in human-computer interaction and usability research: current status and future prospects. Encyclopedia of human computer interaction, pp.211-219.

[10] Spivey, M. (2007) The Continuity of Mind. Oxford University Press.

[11] Cox, A.L. & Silva, M.M. (2006) The role of mouse movements in interactive search. Proceedings of the 28th Annual Meeting of the Cognitive Science Society, pp.1156-1161.

[12] Song, J.H. & Nakayama, K. (2006) Hidden cognitive states revealed in choice reaching tasks. Trends in Cognitive Sciences 13, pp.360-366.

[13] Zhang, B.-T. (2009) Teaching an agent by playing a multimodal memory game: challenges for machine learners and human teachers. AAAI 2009 Spring Symposium: Agents that Learn from Human Teachers, pp. 144-149.

[14] Kim, E.-S., Kim, J., Pfeiffer, T., Wachsmuth, I. & Zhang, B.-T. (2012) ’Is this right?’ or ’Is that wrong?’: Evidence from dynamic eye-hand movement in decision making, Proceedings of Annual Meeting of the Cognitive Science Society (CogSci 2012), pp. 2723.

[15] Grasha, A. (1996) Teaching with style. Pittsburgh, PA: Alliance Publishers.